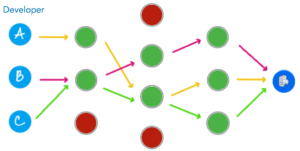

In part one of my Continuous Delivery blog series, I discussed the costs and flexibility of change and how we might reduce cost and decrease the complexity of changes. While Test-Driven Development will undoubtedly improve software quality and increase the ability to adapt to change, there is still the matter of handling concurrent change. After all, we ARE trying to be agile. How might we handle multiple changes, defects, and enhancements?

First and foremost, the code in production must always be supportable. Code freezes or cold periods are not agile, so we must do everything possible to keep a line of code available for production support. Spending hours upgrading a development environment to suit a support task, and then switching it back for other changes, is unproductive and frustrating. Maintaining a multi-tiered environment is expensive, and deployments are confusing.

To that end, have you ever wondered what will happen to your custom software when one of its dependencies, such as a framework or an SAP solution, changes? Wouldn’t it be nice to test all of your custom code in an automated fashion?

Continuous Integration (CI)

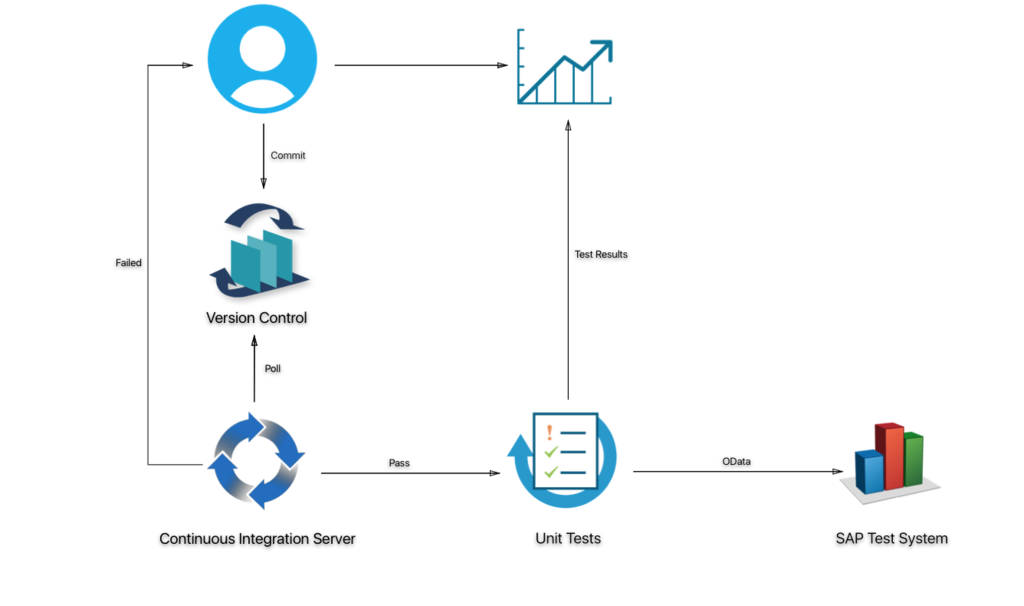

All of the unit and integration tests could be run in an automated fashion, and you could get a report of anything that failed. Running them daily ensures that your code is always being tested, exposing fragile tests and catching newly introduced defects before they reach the tester. Pair this with the paradigm of “releasing early and often” to minimize risk and shorten the time to resolve defects.

Continuous integration automates the build: it integrates dependencies, builds your custom solution, runs the unit tests, runs the integration tests, and reports any failures. When something fails, the development team works on the issue until “the build and tests pass.”
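To make that sequence concrete, here is a minimal sketch of a stage runner in Python. It is not how any particular CI server works; the `Stage` and `run_pipeline` names are hypothetical, and each stage is assumed to be a plain function that returns `True` on success.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Stage:
    """One step of the build (hypothetical type for this sketch)."""
    name: str
    run: Callable[[], bool]  # assumed to return True on success


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first failure.

    Returns (passed, report), where report has one line per stage run.
    """
    report: List[str] = []
    for stage in stages:
        try:
            ok = stage.run()
        except Exception as exc:
            # A crashing stage counts as a failed stage.
            ok = False
            report.append(f"{stage.name}: ERROR ({exc})")
        else:
            report.append(f"{stage.name}: {'PASS' if ok else 'FAIL'}")
        if not ok:
            return False, report
    return True, report


if __name__ == "__main__":
    # Placeholder stages; a real setup would call the build and test tools.
    stages = [
        Stage("integrate dependencies", lambda: True),
        Stage("build", lambda: True),
        Stage("unit tests", lambda: False),
        Stage("integration tests", lambda: True),
    ]
    passed, report = run_pipeline(stages)
    print("\n".join(report))
    print("build passed" if passed else "build broken")
```

Stopping at the first failing stage is a design choice in this sketch: it keeps the report short and points the team at the earliest problem, at the cost of not showing later failures in the same run.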

Continuous Delivery (CD)

Continuous delivery is one step beyond Continuous Integration. It is reached when the development release workflow has been honed to allow rapid, small deployments. More often than not, it is the very mature Agile practitioners who have reached this level of delivery.

To achieve this, a working Continuous Integration server and a well-defined deployment pipeline must be in place. The deployment pipeline is a set of steps for deploying a release or patch rapidly, with as much automation as possible. More often than not, the causes of deployment glitches are human-related, whether they stem from the process itself, environmental differences, or human error. Removing them requires close inspection of current deployment processes and careful analysis of the events that lead to flawed or degraded user experiences post-deployment.
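As one illustration of how a pipeline step can take environmental differences out of human hands, the sketch below compares the settings of two environments before a deployment proceeds. It assumes each environment's configuration can be read into a flat dictionary of strings; the `config_drift` function and the sample keys are hypothetical.

```python
from typing import Dict, Optional, Tuple


def config_drift(
    left: Dict[str, str], right: Dict[str, str]
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return every key whose value differs between two environments.

    A key missing on one side is reported with None for that side.
    """
    drift: Dict[str, Tuple[Optional[str], Optional[str]]] = {}
    for key in sorted(set(left) | set(right)):
        a, b = left.get(key), right.get(key)
        if a != b:
            drift[key] = (a, b)
    return drift


if __name__ == "__main__":
    # Made-up settings, for illustration only.
    staging = {"runtime": "3.11", "db_schema": "42"}
    production = {"runtime": "3.10", "db_schema": "42", "feature_flag": "on"}
    for key, (stg, prod) in config_drift(staging, production).items():
        print(f"{key}: staging={stg!r} production={prod!r}")
```

A pipeline could run a check like this as a gate and refuse to deploy while the result is non-empty, so the difference is resolved before users see it rather than after.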

To be successful with Continuous Delivery, an organization must trust the automation, abilities, and discipline of people upstream and embrace the DevOps culture.

Stay tuned for my next blog post, where I will talk about DevOps, in which operations and developers work together, and see what happens as we begin to blur the lines between the two.
